Quick answer: Accreditation, institutional effectiveness, and strategic planning should work as one system: the institution sets priorities, defines expected outcomes, measures performance, reviews results, and uses that evidence to improve decisions and allocate resources.

Use this guide if: plan refreshes, self-study work, annual reporting, and leadership updates all feel like separate projects and evidence is hard to retrieve across cycles.

Operator note: These functions often sit in different offices, but accreditors and leadership do not experience them as separate worlds. If planning, assessment, evidence, and decision-making are fragmented, the institution feels that fragmentation during self-study, annual reporting, and leadership review.

You know it is working when:

- Strategic priorities map clearly to measures, targets, and review processes.

- Assessment results are visible enough to inform leadership decisions.

- Resource allocation and improvement actions can be traced back to evidence.

- Accreditation preparation feels like organized retrieval, not last-minute reconstruction.

In this guide:

- How accreditation, institutional effectiveness, and strategic planning relate

- What accreditors typically care about in practice

- How to build a cleaner evidence chain

- Common breakdowns institutions experience

- A practical operating model

- FAQs

How do accreditation, institutional effectiveness, and strategic planning fit together?

They are three views of the same institutional system.

- Strategic planning sets direction and priorities

- Institutional effectiveness defines how the institution will measure progress and evaluate performance

- Accreditation tests whether those processes are systematic, documented, and used for improvement

When those three functions are aligned, institutions can explain not just what they planned, but how they assessed progress and what decisions resulted.

What this page is not

This is not an argument for more paperwork. It is an operating model. Accreditation support work gets lighter when planning, effectiveness, and evidence retrieval are part of the same system, not when each office creates more templates.

What do accreditors actually want to see?

Accreditors vary, but the core pattern is consistent. They want to see evidence that planning and effectiveness processes are documented, linked to mission, connected to decision-making, and used for continuous improvement.

That usually means an institution should be able to answer:

- What are our goals and expected outcomes?

- How are those outcomes measured?

- Who reviews the evidence?

- How do results inform action, budgeting, or improvement?

- How is that process sustained over time?

What is the cleanest way to connect these functions?

Build an explicit evidence chain:

- Strategic priority

- Expected outcome or success definition

- Measure or evidence source

- Owner or steward

- Review cadence

- Decision, action, or improvement note

If you can show that chain, accreditation support becomes much easier because the institution is not trying to reverse-engineer connections across separate artifacts.
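The chain above can be sketched as a small record type. This is a minimal Python illustration, not a prescribed schema: the class name `EvidenceLink`, its field names, and the `missing_fields` helper are all assumptions made for the example, and the sample values are invented.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class EvidenceLink:
    """One priority traced from plan to action (hypothetical field names)."""

    strategic_priority: str = ""
    expected_outcome: str = ""
    evidence_source: str = ""
    steward: str = ""
    review_cadence: str = ""
    action_note: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of chain elements that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Illustrative values only; not drawn from any real institution.
link = EvidenceLink(
    strategic_priority="Student success",
    expected_outcome="Improved first-year retention",
    evidence_source="Annual retention report",
    steward="Office of Institutional Effectiveness",
)
print(link.missing_fields())  # ['review_cadence', 'action_note']
```

A check like this makes gaps in the chain visible early: here the priority has a measure and a steward, but no review cadence and no recorded action.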

What to do first

Choose one institutional priority and map it end to end: priority, expected outcome, evidence source, steward, review body, and action taken. If you cannot do that for one priority, you will not be able to do it cleanly for the whole institution.

Where do institutions break down most often?

- Planning lives in one file, assessment in another, and decisions in meeting notes.

- Measures exist, but owners and definitions are unclear.

- Evidence is gathered for accreditation but not used in the institution's regular operating cadence.

- Units are doing planning and assessment work, but there is no enterprise view.

- Leadership sees strategy updates, while accreditation teams see evidence binders, with too little overlap between them.

How should leadership structure this work?

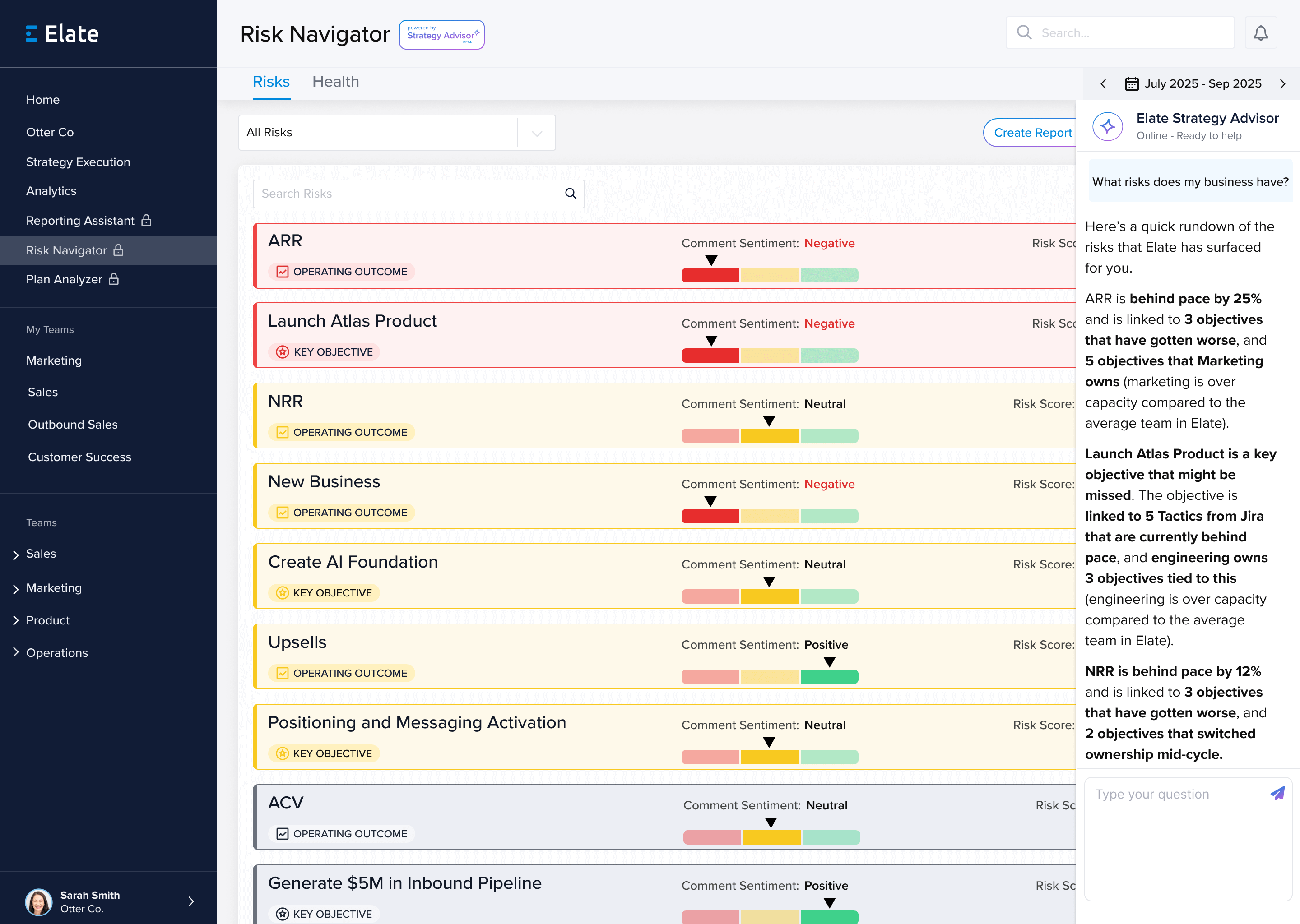

A practical model is to keep the formal evidence system and the executive review system connected but distinct.

- Institutional effectiveness dashboard: measure definitions, targets, evidence, stewards

- Strategic plan dashboard: leadership-facing progress and risk view

- Progress report and board reporting: audience-specific packaging of the same underlying logic

That structure reduces reporting friction and makes accreditation support work more sustainable.

Copy/paste template: accreditation, institutional effectiveness, and strategic planning map

Example scenario: A university wants to show how a student success priority connects from plan to evidence to action. Instead of storing that logic in separate folders, the institution maps the priority to its measure, steward, review body, and resulting improvement action in one repeatable format.

Strategic priority: [goal or pillar]

Expected outcome: [what success looks like]

Measure or evidence source: [metric, assessment, survey, report]

Steward: [office or role]

Review body / cadence: [committee or leadership group + frequency]

Recent result: [brief result summary]

Action taken: [decision or improvement action]

Accreditation relevance: [how this supports institutional evidence]
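If the map is kept as structured data, the template above can be filled in programmatically. The following is a minimal Python sketch under stated assumptions: the labels come from the template, while the dictionary keys, the `render_map` function, and the `[not yet documented]` placeholder are hypothetical choices made for illustration.

```python
# Labels follow the template above, in order; the short keys are hypothetical.
TEMPLATE_FIELDS = [
    ("priority", "Strategic priority"),
    ("outcome", "Expected outcome"),
    ("evidence", "Measure or evidence source"),
    ("steward", "Steward"),
    ("review", "Review body / cadence"),
    ("result", "Recent result"),
    ("action", "Action taken"),
    ("relevance", "Accreditation relevance"),
]


def render_map(entry: dict) -> str:
    """Render one entry in the template's label order, flagging gaps."""
    lines = []
    for key, label in TEMPLATE_FIELDS:
        # Blank or absent values are shown as an explicit placeholder.
        value = str(entry.get(key, "")).strip() or "[not yet documented]"
        lines.append(f"{label}: {value}")
    return "\n".join(lines)


# Illustrative entry; the values are invented for the example.
example = {
    "priority": "Student success",
    "evidence": "Annual retention report",
    "steward": "Provost's office",
}
print(render_map(example))
```

Rendering every entry through the same function keeps the format repeatable, and the placeholder shows at a glance which parts of the map still need evidence.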


FAQs

Do accreditors require a dashboard?

No. They require evidence of a functioning system. A dashboard is useful because it makes that system easier to run and easier to explain.

Should accreditation work be owned only by the accreditation office?

No. Accreditation touches the whole institution. A central office can coordinate it, but the evidence chain has to live across planning, assessment, and leadership review.

What is the fastest way to reduce accreditation reporting friction?

Standardize your priority-to-measure-to-action logic. That tends to remove more pain than adding another document template.
